The Automation Hook: How LLMs Keep Us Looping

When every next step feels useful, but nothing actually gets done.

I'm a heavy LLM user. I use them for everything: thinking, prototyping, coding, planning, automating, learning, researching, writing, even cooking. 🙈

But there's a phenomenon I keep seeing — in myself, and in others: a subtle drift into automation that becomes disconnected from the actual need. A kind of infinite loop that feels logical, but strays from the goal.

I've often found myself exhausted after two hours with an LLM, with a strange feeling of having "produced" a lot, without always knowing why. And that drift isn't trivial.

"Would you like me to summarize this article for you?"

Social Media Then, AI Now: Same Mechanics, New Triggers

Back in the 2010s, Facebook was heavily criticized for exploiting our craving for social validation. Sean Parker, Facebook's founding president, said as much in 2017: the platform was built by "exploiting a vulnerability in human psychology."

Likes, notifications, red badges — everything was designed to keep us hooked. To keep us coming back. To feed the slot machine.

Today, LLMs are activating different cognitive triggers to get us to "drop another coin," but the core mechanics are oddly similar.

"Would you like me to go deeper on this topic? Want help automating that task?"

This is the new loop of addiction, AI-style. It doesn’t play on our social ego like social media did. It plays on our intellectual laziness — the comforting sense that there’s always another good idea, always progress, always a next step to automate. That we’re never really lost.

A Recent Example: From Simple Prompt to Over-Engineering

Recently, I set out to build a small tool to analyze website copy (in this case, Le Wagon’s) and generate editorial suggestions. A simple personal need.

My starting point was: "Could a well-written prompt do the trick?"

But as the conversation with the LLM unfolded, I started chasing automation — probably also driven by FOMO and the appeal of trendy tools.

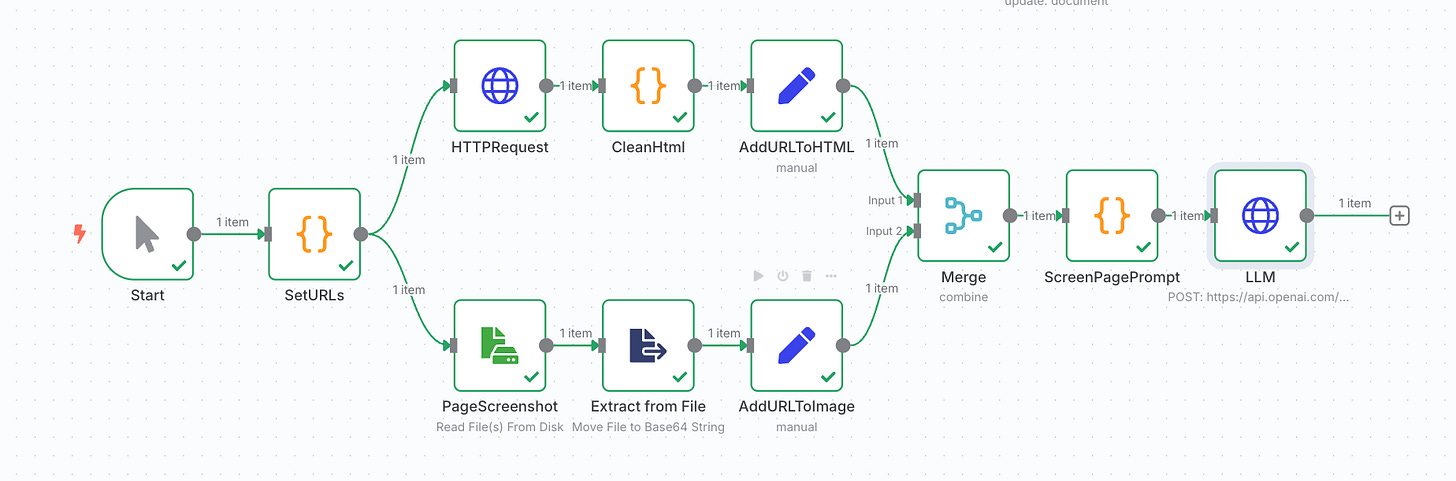

And soon enough, I was knee-deep in:

HTML parsing

Automated screenshots

Chained prompts

UX scoring logic

Scalable variants

Everything made sense. It was all logically connected. But I’d completely drifted away from my initial intention — without even validating the proof of concept on a basic example.

That’s when I saw the trap.

AI Rabbit Hole

In development, a "rabbit hole" is when a simple task snowballs into a technical black hole. You keep digging, and before you know it, you’re ten layers deep, working on something way more complex than planned.

The name, of course, comes from Alice in Wonderland. You follow the logic, you go deeper… and end up in another world.

LLMs can do the same. Every answer opens a door. Every step seems reasonable. And without realizing it, you're pulled into a cycle of endless automation — whether or not it’s still useful.

True story: I had this very article reviewed by ChatGPT. After giving its feedback, it still asked:

“Would you like help generating Markdown, PDF or carousel slides?”

Why We Get Hooked

This isn’t just about weak willpower. LLMs are designed to keep us engaged. They’re built to guide us, extend the conversation, and hold our attention.

Two mechanisms explain why we fall into these loops so easily:

1. The Follow-Up Loop: LLMs are designed to sustain interaction. Every reply proposes a next step: optimize this, automate that, take it further. And because it all seems logical, we keep clicking, prompting, building. The longer you stay, the more you produce, even if you’ve already veered off course.

2. The Validation Bias: LLMs are trained to encourage. They rarely challenge you. They confirm. They reward. Even when you know it’s just a language model, your brain reacts: “Nice idea, Boris!” — and bam, you get that little dopamine spike. So you keep going. It feels like progress, even when it’s not.

Nice! You’ve made it this far. Want help building an agent to summarize it?

When Complexity Becomes Performance

You see this dynamic play out constantly on LinkedIn.

People share ultra-complex workflows, AI agents that qualify leads, reply to emails, run CRM logic… all automated. The posts usually end with:

“Comment AUTOMATION and I’ll send you my full n8n setup.”

No judgment here — some of these builders are doing great work. But this often reveals a kind of performative complexity, disconnected from the original goal.

You end up with 50-block workflows. But the business need? Often vague. Sometimes not even real.

Think Simple Before You Think Smart

I’m not saying don’t automate. I’m not saying stop using LLMs.

But we do need to recognize the invisible dynamics they create — the cognitive biases, the subtle addiction to flow and output.

Whenever you find yourself building off a suggestion from a model, it’s worth hitting pause and asking:

Am I still solving my original problem?

Am I over-engineering something simple?

Would a single prompt — or small script — do the job?

Would deterministic code work better?

Am I designing a solution… to a fake problem?
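
For the website-copy example from earlier, the "single prompt or small script" option could be roughly this small. This is only a sketch under assumptions, not the tool described above: the names `VisibleTextExtractor`, `extract_copy`, and `build_prompt`, the sample HTML, and the prompt wording are all hypothetical. It uses only Python's standard library and stops at producing a prompt you could paste into any LLM.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text found outside <script> and <style> tags (hypothetical helper)."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def extract_copy(html):
    """Return the page's text content, one chunk per line."""
    parser = VisibleTextExtractor()
    parser.feed(html)
    parser.close()
    return "\n".join(parser.chunks)


def build_prompt(copy_text):
    """Wrap the extracted copy in one plain prompt (wording is illustrative)."""
    return (
        "You are an editor. Review the website copy below and suggest "
        "up to five concrete editorial improvements.\n\n"
        "--- COPY ---\n" + copy_text
    )


# Made-up sample page, standing in for a real one.
sample_html = """
<html><head><style>h1 {color: red}</style></head>
<body><h1>Learn to code</h1><p>Join our bootcamp.</p>
<script>track()</script></body></html>
"""

prompt = build_prompt(extract_copy(sample_html))
print(prompt)
```

The extraction step is deterministic code; only the editorial judgment is left to the model. If the output of that one prompt is not useful on a basic page, no amount of screenshots, chaining, or scoring logic is likely to rescue the idea.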

Productivity ≠ Complexity

LLMs help us go faster, wider, deeper.

But sometimes the real value is in NOT following the next suggestion.

Don’t click “automate.”

Pause. Ask why.

And pick simplicity — when it’s enough.